Andrea Miotti @_andreamiotti

Trying to make the future go well. executive director @ai_ctrl, advisor @ConjectureAI andreamiotti.substack.com Joined April 2020

Tweets: 1K · Followers: 982 · Following: 296 · Likes: 7K

Lord Clement-Jones argued for supply chain regulation on deepfakes, and recognised our deepfake campaign at last night's Lords Committee Debate. "I also pay tribute to ControlAI, who are vigorously campaigning on this subject in terms of the supply chain for the creation of…

The new CEO of Microsoft AI, @mustafasuleyman, with a $100B budget at TED: "AI is a new digital species." "To avoid existential risk, we should avoid: 1) Autonomy 2) Recursive self-improvement 3) Self-replication We have a good 5 to 10 years before we'll have to confront this."

*has one drink* guys did u know that the recently appointed head of safety at the US government’s AI safety institute thinks there’s a 50% chance AI will kill everyone on earth, isn’t that so so crazy

Positive development in the UK on harmful deepfakes, but as Andrea notes, measures should be put in place that apply across the deepfake supply chain, including to model providers.

There may be no person I know doing better work in the AI regulation space than my friend Andrea, take a look at this latest interview with him on the incredible work @ai_ctrl has been doing around banning deepfakes!

I talked with @ElliePittTV on @ITV yesterday about the UK's new measures on deepfakes. For months we've been campaigning to ban the creation of deepfakes - now the government has acted with establishing a new criminal offence. But the work isn't done. To stop deepfakes,…

The new Head of AI Safety for the US government's AI Safety Institute believes that superintelligent artificial intelligence is the single most likely reason that he (and by extension all of us) will die. Those in the know know how dangerous this tech is. So why aren't we…

This is extremely welcome. However, it won't tackle the upstream enablers of deepfakes: the companies and nudification apps that enable explicit deepfakes to be created and accessed. @ai_ctrl is calling for requirements on cloud service providers to ensure their tools are not…

New long timelines just dropped: artificial superintelligence >1 year away.

It’s time for the next chapter. AI Seoul Summit 🇬🇧 🇰🇷 Find out more: gov.uk/government/new…

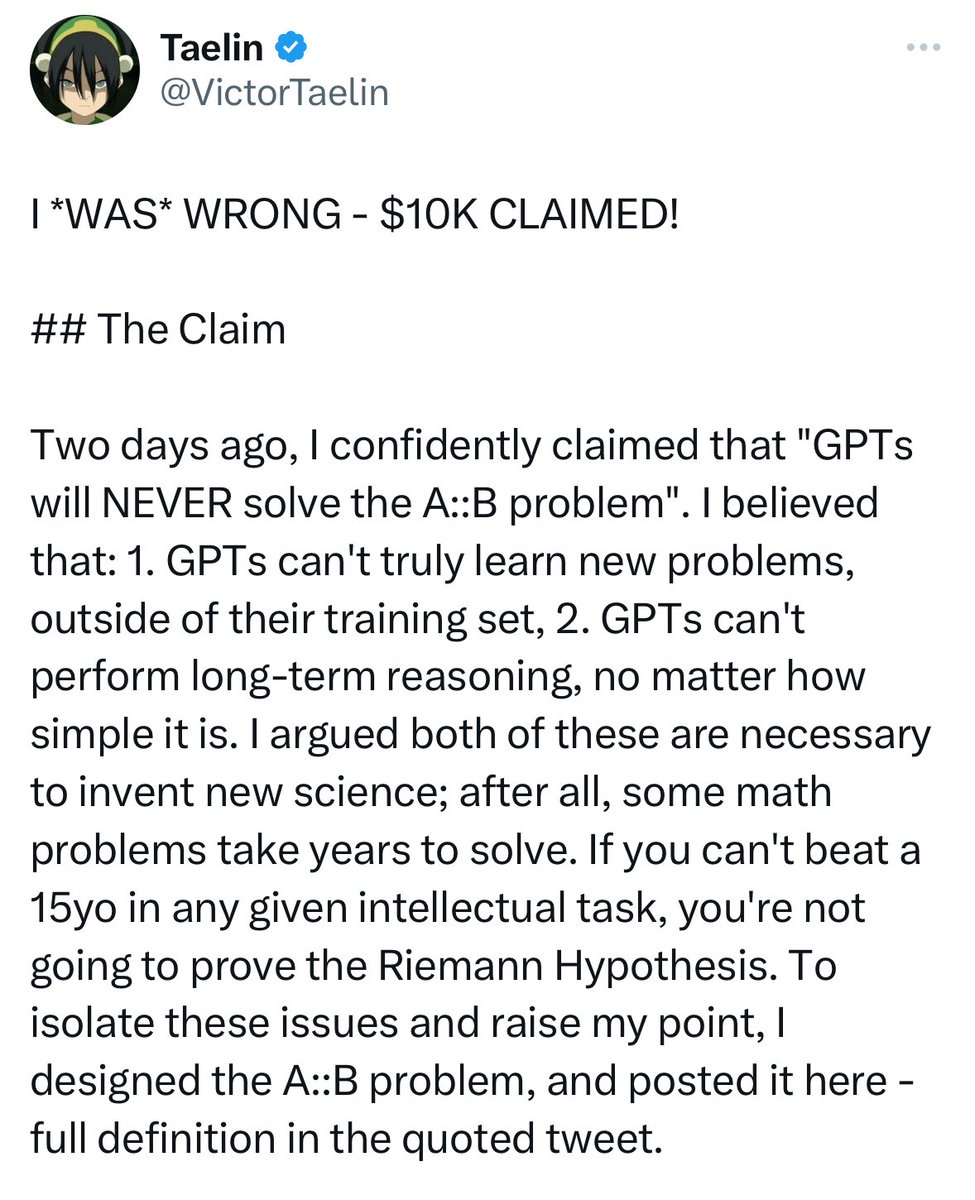

> “GPTs will NEVER solve this simple puzzle” > issues $10k challenge to be proven wrong > proven wrong in less than 24 hours

@ESYudkowsky while computers may excel at soft skills like creativity and emotional understanding, they will never match human ability at dispassionate, mechanical reasoning

There are only two times to react to an exponential: too early, or too late.

A reminder that information processing, whether in silicon or in biological matter, is a physical process.

i mean i called it "machine learning" until it started talking to me and then i thought it was fair to say "ai"

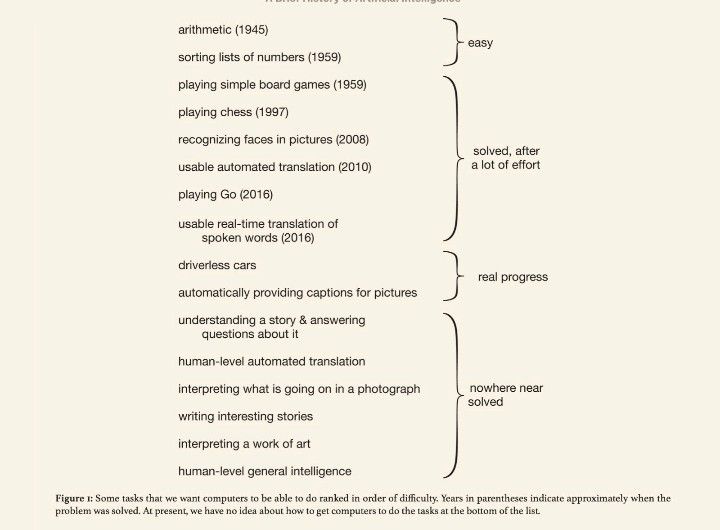

Reminder: a list of "Nowhere near solved" issues in AI, from "A brief history of AI", published in January 2021.

The spice keeps flowing

Furthermore, we are first and foremost an asteroid mining *safety* company. That is why we need to race as quickly as possible to be at the forefront of asteroid redirection, so more dangerous companies don’t get there before us.

Many of our capabilities in bits (institutions, coordination) also decay.

I'm really enjoying this author's annotated version of "A Fire Upon the Deep": 3e.org/vvannot/00.html Learning some useful things about writing from Vinge's inline comments to himself about problems, dialogue with his editor, and so on Here's Vinge diagnosing and fixing a…

Eric Challender @2showme

134 Followers 314 Following The belief in a thing makes it happen. - Frank Lloyd Wright

Khongcanhoi Huy @Khongcanho45309

12 Followers 16 Following

Hauke Hillebrandt @Ha_uke

325 Followers 993 Following Former researcher at @Harvard @UCL @CGDev @CentreforEA and Founder of https://t.co/hgikUchI9b https://t.co/RjW7rjb4IC

Merhawi @Merhawi35313169

49 Followers 152 Following

Elyon @ElyonTarut

8 Followers 388 Following Technomancer | Hermetic Magician Forgive and 🌹✝️ Love Yourself From %00 Return to %00

Skip Intro | LAB - La.. @skipintrolab

9 Followers 133 Following AI experimentation lab for the audiovisual and video game sector. Skip Intro Academy

baobab1b @Aalalalal111

76 Followers 294 Following

Grant Lenaarts @GrantLenaarts

1K Followers 5K Following https://t.co/oysTF0skvN The Age Of Decision. contributionism, a new ethic.

Jeff Thomas Black @LRBitisnot

29K Followers 31K Following #Economics, #CivilRights, #AI, #DukeMBA, #Author, "Full Dashclosure, Awakening from the human exploitation of DoorDash Singularity"

Fabian Schlupp @fabian_schlupp

17 Followers 337 Following Rather primitive version of a continuous learning model. Interested in AI research, AI applications, AI governance, AI… you get the idea

John Daniels @JustAnAlgorithm

115 Followers 702 Following Software Eng with an interest in all things technology. In the simulation running sims. Life GTO - "However vast the darkness, we must supply our own light"

MLȘaman aka Andrei .. @andreinot

381 Followers 2K Following ¦ machine learning engineer ¦ quasi polymath ¦ father ¦ ~entrepreneur ¦ preppin to surf the intelligence explosion shockwaves & help others not drown too ¦

Kristofoletti @jokristo

319 Followers 395 Following

M.S. Jackson @TheOracleM

1K Followers 3K Following Tweets mostly related to my live-action #AI 'SuperEntity' movie project. Storyboard-filmscript on website. Enjoy! #SciFi #Singularity #TheOracleMachine

Yashvardhan Sharma @yashvardhan_07

95 Followers 227 Following worried about the race to building AGI

Juanjo do Olmo - .. @Claxterix

490 Followers 2K Following Please don't take my retweets too serious. - Twitter is a social experiment 😇. Medical AI Researcher @foundation29feb / BSc Pharma / AI from @iia_es

🌞🌻🌵🌱🌿.. @crossslide

661 Followers 4K Following Amethyst, orchid, mulberry, midnight blue, navy blue, electric blue, sapphire, turquoise, cyan, aqua, olive green, pine green, sea green. 41 years old.

Szikszai Maria @twszm

29 Followers 44 Following lecturer, PhD, Babes-Bolyai University anthropology, ethnography

Tyler @tylertracy321

282 Followers 727 Following Typescript / Rust dev. Agent foundations @ AI safety camp. Ex Google. FOSS. Trying to stop AI take over the world

Jon Hernandez @thekubestudio

678 Followers 108 Following Artificial intelligence communicator and photographer

Deranged Sloth @deranged_sloth

102 Followers 318 Following farting around in this grand and mysterious universe p(doom)=📎

🔨 @Justice_fikri

756 Followers 2K Following

Bad idea haver @ScreamingCow

55 Followers 620 Following

Mélange Caracole @MelangeCaracole

41 Followers 4K Following 'I am one of those smaller scale disturbances, with an attitude' -- elspethc

Konstantin ⏸️ @kammthal

108 Followers 600 Following

Lucas @lucas_prie

6 Followers 314 Following

T-cell, PhD @Tcell_bodyshot

223 Followers 668 Following Aristotelian lymphocyte. Entrenched disputes about the causes of human affairs are disguised moral conflicts that will not be resolved by objective data alone.

Dona Bayesiana @donabayesiana

92 Followers 2K Following Computing, chaos theory, heavy music. I think my hobby is having kids, since I have 3.

ClockworkRelativity @Clockrelativity

219 Followers 829 Following undergraduate AI nerd. likes AI governance. Tried applying for @OpenAI twice. breaker of image models #actuallyautistic ✡️ Jonah 1:9 Founder of @ClockworkAI03

Eric @aporeticaxis

145 Followers 802 Following here for memes and alpha / 'In seeking, I ask not for certainty but for an encounter with the ineffable'

Riccardo Varenna @RiccardoVarenna

92 Followers 34 Following ⌨️ Chief of Staff @ https://t.co/gSV3YFeUPd 🌱Plant based

Horizon Events @HorizonEvents9

12 Followers 270 Following Events consultancy dedicated to advancing R&D in AI safety

George Gor @ggomondi

874 Followers 2K Following Analyst @thefuturesoc. Learn, work, be independent✨

Johnny V. Neumann @JancsiNeumann

72 Followers 546 Following only close friends can call me John. for professional inquiries contact: @witten271

Claris Consult @clarisaiconsult

2K Followers 5K Following Claris Consult: Tailored, ethical AI solutions for business growth, innovation, and success, prioritizing accessibility and real-world impact.

Mikhail Samin @Mihonarium

2K Followers 393 Following Future general AI systems are a threat to everyone and should be regulated ⏹️. Previously, founded https://t.co/w7GPwsBCjo and printed 21k copies of HPMOR

rufoguerreschi @rufoguerreschi

675 Followers 1K Following Fostering a global, democratic, federal, timely and expert governance of AI, to turn it into humanity’s greatest invention.

Tara Steele ⏸️ @tarasteele22

60 Followers 132 Following ‘…there’s 10% chance AI will wipe out humanity in the next 20 years’, Geoffrey Hinton, ‘Godfather of AI’. Perhaps stopping for a bit would be sensible?!🤷🏼♀️

Steve Moraco @SteveMoraco

12K Followers 7K Following born on earth. move fast and make things. here on twitter to generate more high-quality tokens to use for training data. wikipedia & window seat addict ᯅ

Simon Wisdom @simonwisdom

30 Followers 52 Following

Coleman Snell @SnellColeman

82 Followers 162 Following “Wow lovely {AGI} we’re having” Cornell University | Research on Societal Collapse, emerging technology, and global catastrophic risk | Science Communicator.

Campaign to Ban Deepf.. @BanDeepfakes

15 Followers 27 Following A coalition aimed at banning non-consensual AI-generated voices, images and videos at every stage of production and distribution.

Michael Cohen @Michael05156007

1K Followers 144 Following I do AGI Safety research. https://t.co/CBsX51tA39. Once I was swiss chard for Halloween. Once Bill Clinton elbowed me in the face.

AI Safety Institute @AISafetyInst

532 Followers 29 Following We’re building a team of world leading talent to tackle some of the biggest challenges in AI safety - come and join us.

Edouard Harris @harris_edouard

5K Followers 2K Following Cofounder & CTO @GladstoneAI https://t.co/aGM324ATKG

International Dialogu.. @ais_dialogues

151 Followers 0 Following

Kirsty Innes @kmei_

2K Followers 4K Following Director, Tech Policy @LabourTogether. Previously @InstituteGC, @HMTreasury, @UKinFrance. She/her. Views my own, obvs

ai_in_check @ai_in_check

733 Followers 347 Following

Grace Kind @kindgracekind

2K Followers 2K Following AI navel-gazer / Ideonomy evangelist / navigator of uncertain waters

Spencer Greenberg .. @SpencrGreenberg

19K Followers 6K Following A mathematician/entrepreneur in social science. Tweets about psychology, society, rationality, tech, science, and philosophy. Founder of https://t.co/2YGraOwo77

Garrison Lovely @GarrisonLovely

4K Followers 3K Following Freelance journalist + podcast host. Covers: @jacobin, @thenation Bylines: @bbc_future, @voxdotcom, @nysfocus & @curaffairs.

Raiany Romanni @RaianyRomanni

1K Followers 799 Following Writer, humanist, bioethicist. 🪷 | + Life 🧬

Geoffrey Miller @primalpoly

140K Followers 7K Following Psych professor; wrote The Mating Mind, Spent, Mate, Virtue Signaling. Themes: Evolution, sentience, civilization, EA, X risk, crypto. Wife: @sentientist. ⏸️

Dave Kasten @David_Kasten

777 Followers 3K Following Do what seems cool next. Formerly: McKinsey, VaccinateCA, Activision Blizzard.

Aidan O’Gara @aidanogara_

327 Followers 1K Following Research and writing at https://t.co/B3xADRCjCd

Samuel Hughes @SCP_Hughes

29K Followers 203 Following Research Fellow, @UniofOxford | Head of Housing, @CPSThinkTank | Fellow, @createstreets | Interested in architecture & urbanism | Views my own

Sarah Owen MP @SarahOwen_

27K Followers 8K Following Labour MP for Luton North |🇬🇧🇲🇾| Promoted by and on behalf of Sarah Owen at 3 Union St, LU1 3AN

David Lawrence @dc_lawrence

6K Followers 4K Following Trying to build good things faster with @ukdayone and stop bad things with @ai_ctrl. Previously @ChathamHouse @UKParliament @JesusOxford

Dr. Roman Yampolskiy @romanyam

116K Followers 374 Following Professor of Computer Science. AI Safety & Security Researcher. AI Influencer. My opinions are now yours! I do NOT #followback

Tim Fist @fiiiiiist

742 Followers 424 Following Senior Tech Fellow @IFP. Senior Adjunct Fellow @CNASdc. AI & compute policy, science, innovation.

AI Impacts @AIImpacts

2K Followers 127 Following AI Impacts aims to improve our understanding of the likely impacts of human-level artificial intelligence.

Rosanna Lockwood @Roolockwood

10K Followers 3K Following Journalist and news anchor. English accent, international outlook (not a spy): https://t.co/exdlAn2UZv || Senior Fellow @JSchofieldTrust

Anka Reuel @AnkaReuel

1K Followers 972 Following Computer Science Ph.D. Student @ Stanford | Responsible AI Research Lead @ Stanford AI Index | Responsible AI | AI Governance | Views are my own

Matthijs Maas @matthijsMmaas

2K Followers 3K Following Senior Research Fellow at @law_ai_ | Research Affiliate (AI Governance) @CSERCambridge and @LeverhulmeCFI

Rory Greig @rorygreig1

654 Followers 4K Following Research Engineer at Google DeepMind, interested in AI Alignment and Complexity Science.

Alexandre Variengien @A_Variengien

165 Followers 118 Following Independent researcher - Trying to leverage scientific knowledge to inform AI policy development. Previously at Redwood Research & Conjecture.

Sam Ashworth-Hayes @SAshworthHayes

13K Followers 1K Following Assistant Comment (op ed) Editor @telegraph. Opinions my own and should be yours too.

nostalgebraist @nostalgebraist

2K Followers 399 Following

Jamie Bernardi @The_JBernardi

915 Followers 655 Following Doing AI Governance research, ex-Co-Founder, Bluedot Impact. Climber, guitarist and sporadic musician. he/him.

Roko @RokoMijic

10K Followers 214 Following Radical Centrist, Transhumanist We wanted eternal life, instead we got 140 new genders. Join me at the link below for decentralized AI alignment:

Jonas Vollmer @Jonas_Vollmer

2K Followers 1K Following Co-founded @AtlasFellow. Previously ED at EA Funds and @LongTermRisk.

Dagan Shani @daganshani1

828 Followers 742 Following Video director and editor. Lately I lose a lot of sleep, because of AI. https://t.co/wF8eV3iqly https://t.co/zjfAXIWwH6

Kersti Kaljulaid @KerstiKaljulaid

61K Followers 409 Following - President of Estonia | 2016-2021 | President of the Republic of Estonia -

Wei Dai @weidai11

7K Followers 82 Following wrote Crypto++, b-money, UDT. thinking about existential safety and metaphilosophy. blogging at https://t.co/mBVFhriJVf

@mrgunn ⏸️ @mrgunn

7K Followers 965 Following Comms consultant for AI safety & governance. Talk to me: https://t.co/djOzH9Pbui Ex: Head of Comms @Quora @mendeley_com @ElsevierConnect

Sergey Karayev @sergeykarayev

11K Followers 3K Following

Luca Bertuzzi @BertuzLuca

13K Followers 4K Following Technology journalist specialised in digital policy & European affairs. Ex @EURACTIV. Bylines @PrivacyPros, @repubblica, @tagesspiegel. DMs open.

James Fickel 🪷 @jamesfickel

2K Followers 374 Following

Kanjun 🐙🏡 @kanjun

17K Followers 487 Following understanding human & machine minds to build a creative abundant future. CEO @imbue_ai. support founders @outsetcap. co-organize https://t.co/H1aXYk96ja.

bioshok(INFJ) @bioshok3

19K Followers 2K Following AGI/AI Alignment/Existential risk/X-risk/Super Intelligence/Singularity/Technology trends https://t.co/2vsogSTe3X (the philosophy of MBTI) A sekai-kei world praying to lookism INFJ/5w4/ILI Note: I am not an AI researcher

Kevin Esvelt @kesvelt

9K Followers 23 Following Sculpting evolution & safeguarding biotechnology, MIT Media Lab.

James Baker @JamesBaker__

205 Followers 679 Following AI, tech and national security @harvard_law. Previously lawyer and diplomat @FCDOGovUK

David Wood - h/acc, u.. @dw2

9K Followers 5K Following Chair, London Futurists. Executive Director of LEV Foundation. Author or Lead Editor of 12 books about the future. PDA/smartphone pioneer. Symbian co-founder

Gary Marcus @GaryMarcus

145K Followers 7K Following “A beacon of clarity”. Spoke at US Senate AI Oversight committee. Founder/CEO Geometric Intelligence (acq. by Uber). Rebooting AI & Taming Silicon Valley.

@lulumeservey The first time I was on CNBC, I asked the advice of a friend who is a director in Hollywood and he said—"There will be monitors to the left and right of you that display what the TV audience is seeing. DON'T try to sneak a look because it'll give you shifty eyes."

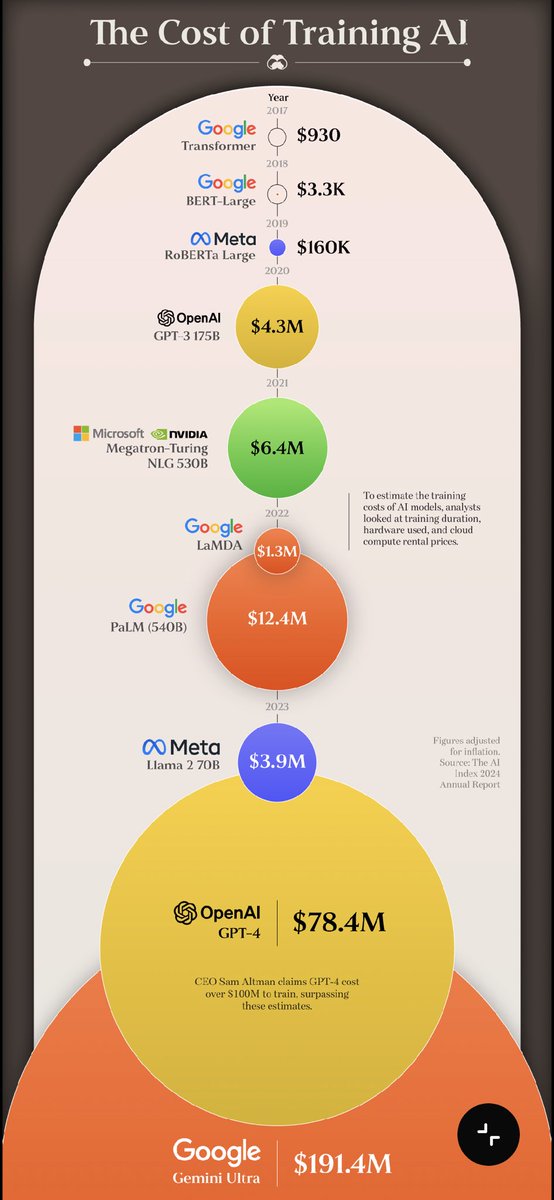

If you’d like to learn more, we’ll discuss training costs in greater depth in an upcoming report, in collaboration with @StanfordHAI.

There’s been a lot of interest in Epoch’s training cost estimates, featured in Stanford’s 2024 AI Index Report. Our estimates show the skyrocketing costs of training large AI models. Want to learn more about how we calculated these costs? 🧵 x.com/chiefaioffice/…

AI training cost estimates from the Stanford 2024 AI Index Report: Original transformer model - $930 GPT-3 - $4.3M GPT-4 - $78.4M Gemini Ultra - $191.4M
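
The four quoted figures imply very steep growth from one model to the next. The small sketch below uses only the numbers in the tweet and computes the ratio between each consecutive pair. The dictionary and the `growth_factors` helper are illustrative names, not part of the AI Index or Epoch's methodology, and the ratios say nothing about how the underlying estimates were produced.

```python
# Training-cost estimates in USD, as quoted in the tweet from the Stanford 2024 AI Index Report.
TRAINING_COSTS_USD = {
    "Original transformer": 930.0,
    "GPT-3": 4.3e6,
    "GPT-4": 78.4e6,
    "Gemini Ultra": 191.4e6,
}


def growth_factors(costs: dict) -> dict:
    """Ratio of each model's estimated cost to the previous model's, in listed order."""
    names = list(costs)
    return {
        f"{prev} -> {curr}": costs[curr] / costs[prev]
        for prev, curr in zip(names, names[1:])
    }


if __name__ == "__main__":
    for step, factor in growth_factors(TRAINING_COSTS_USD).items():
        print(f"{step}: x{factor:,.1f}")
```

Run as-is, it prints one multiplier per step; for example, GPT-3 to GPT-4 works out to roughly 18x (78.4 / 4.3).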

Available on the 80,000 Hours Podcast anywhere you listen to podcasts of course. Video is also on YouTube: youtube.com/watch?v=qOr734… And a transcript and lots of resources to learn more here: 80000hours.org/podcast/episod…

Sleeper agents + the biggest AI updates since ChatGPT | Zvi Mowshowitz (@TheZvi) • The big thing everyone missed in the sleeper agents paper • Where he disagrees with me • Which company has the best safety plan • 'Pause AI' • More

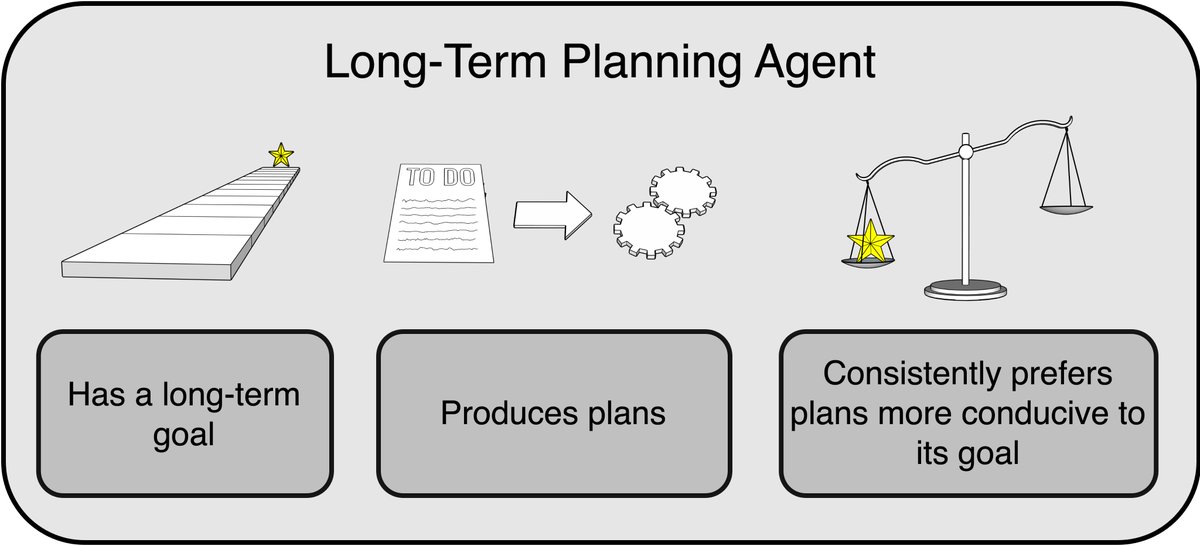

Governments need to stop people from building dangerously capable long-term planning agents, because we wouldn't be able to verify with empirical tests that the agents were safe.

We could easily have a situation where advanced AI models "Volkswagen" themselves; they behave well when they're being watched closely and badly when they're not. But unlike in the famous Volkswagen case, this could happen without the owner of the AI model being aware.

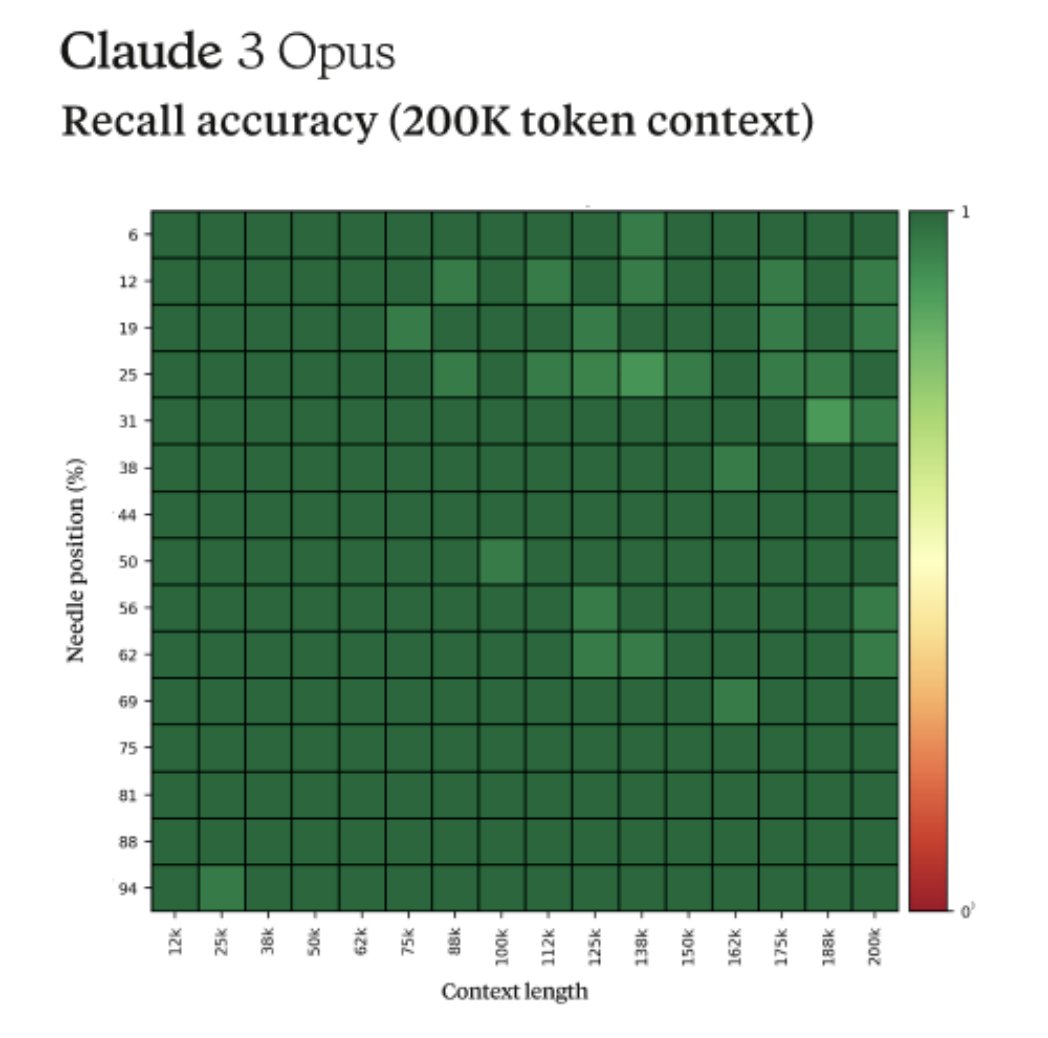

Claude has also demonstrated it can guess when it is being tested. (That's not to say that in this case, it used that information to decide to pause any misbehavior). x.com/alexalbert__/s…

Fun story from our internal testing on Claude 3 Opus. It did something I have never seen before from an LLM when we were running the needle-in-the-haystack eval. For background, this tests a model’s recall ability by inserting a target sentence (the "needle") into a corpus of…
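
The tweet describes the needle-in-the-haystack eval only in outline (a target sentence inserted into a larger body of text, with the model then asked to recall it), and the description is cut off. The sketch below is a minimal illustration of that general idea, not Anthropic's harness. It assumes the haystack is a list of filler sentences and that `ask_model` is a hypothetical callable standing in for the model under test.

```python
from typing import Callable, List, Sequence


def build_haystack(filler: List[str], needle: str, depth: float) -> str:
    """Insert the needle sentence at a relative depth (0.0 = start, 1.0 = end) of the filler."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    position = round(depth * len(filler))
    return " ".join(filler[:position] + [needle] + filler[position:])


def needle_recall(
    ask_model: Callable[[str, str], str],  # hypothetical: (context, question) -> answer text
    filler: List[str],
    needle: str,
    question: str,
    expected: str,
    depths: Sequence[float],
) -> float:
    """Fraction of insertion depths at which the model's answer contains the expected string."""
    hits = 0
    for depth in depths:
        context = build_haystack(filler, needle, depth)
        reply = ask_model(context, question)
        if expected.lower() in reply.lower():
            hits += 1
    return hits / len(depths)


if __name__ == "__main__":
    filler = [f"Filler sentence number {i}." for i in range(100)]
    needle = "The secret ingredient is figs."

    # Toy stand-in for a model: it "answers" by returning any fragment that mentions "secret".
    def toy_model(context: str, question: str) -> str:
        return " ".join(s for s in context.split(". ") if "secret" in s)

    depths = [0.0, 0.25, 0.5, 0.75, 1.0]
    print(needle_recall(toy_model, filler, needle, "What is the secret ingredient?", "figs", depths))
```

Real evaluations of this kind usually vary the context length as well as the insertion depth. This sketch varies only the depth, and it scores recall with a simple substring match.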

Importantly, for very advanced AI agents acting in complex environments like the real world, we can't count on being able to hide from them the fact that they're being tested. In fact, Lehman, et al. (2020) found an example of agents pausing their misbehavior during testing.

For example, suppose a leader was looking for a general, but worried the general might stage a coup. If the leader tries to test this, the candidate could recognize the test and behave agreeably, or they could execute a coup during the test. And you can't come back from that.

Would the AI agent exploit an opportunity to thwart our control over it? Well, does the agent have such an opportunity during the test? If yes, that's like testing for poison by eating it. If no, its behavior doesn't answer our question. So sometimes there's just no safe test.

Well, we shouldn't allow such AI systems to be made! They haven't been made yet. A key problem with sufficiently capable long-term planning agents is that safety tests are likely to be either unsafe or uninformative. Suppose we want to answer the question:

This is with my excellent co-authors Noam Kolt, Yoshua Bengio, Gillian Hadfield, and Stuart Russell. See the paper for more discussion on the particular dangers of long-term planning agents. What should governments do about this? science.org/doi/10.1126/sc…

Recent research justifies a concern that AI could escape our control and cause human extinction. Very advanced long-term planning agents, if they're ever made, are a particularly concerning kind of future AI. Our paper on what governments should do just came out in Science.🧵

the right time to overuse the word ai is when you are speaking with investors not consumers