techmaraudersmap @techmaruadermap

I write about #AI #MLjobs #softwareengineering #aiengineering #softskills. Joined April 2024

Tweets: 25 | Followers: 5 | Following: 85 | Likes: 16

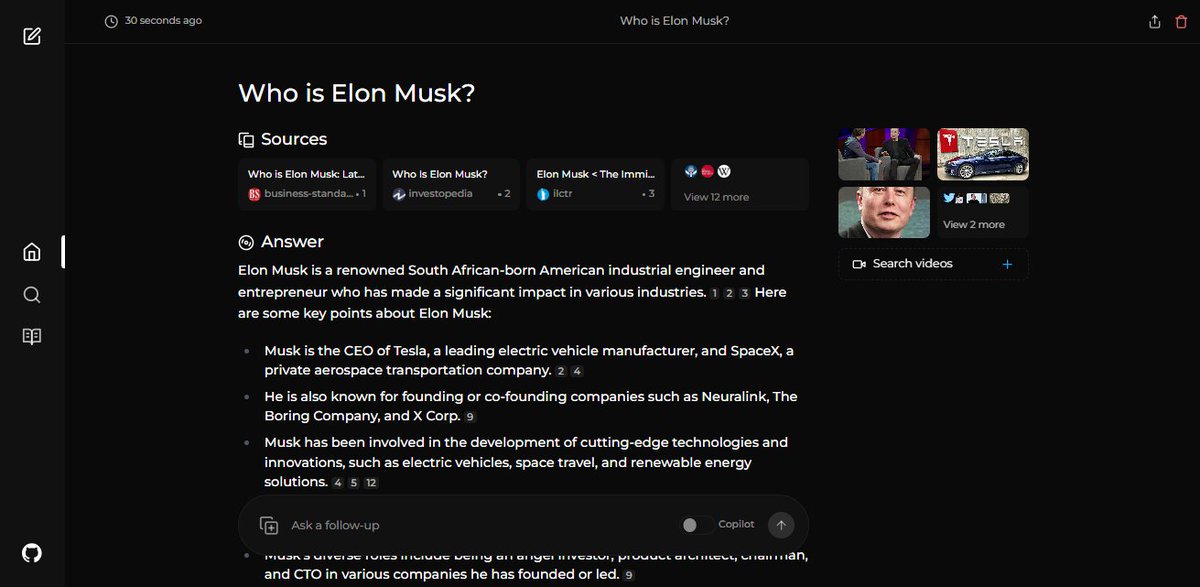

🚀 Perplexica - An AI-powered search engine 🔎 Perplexica is an open-source AI-powered searching tool or an AI-powered search engine that goes deep into the internet to find answers. Built with LangChain, this is a great resource for getting started! github.com/ItzCrazyKns/Pe…

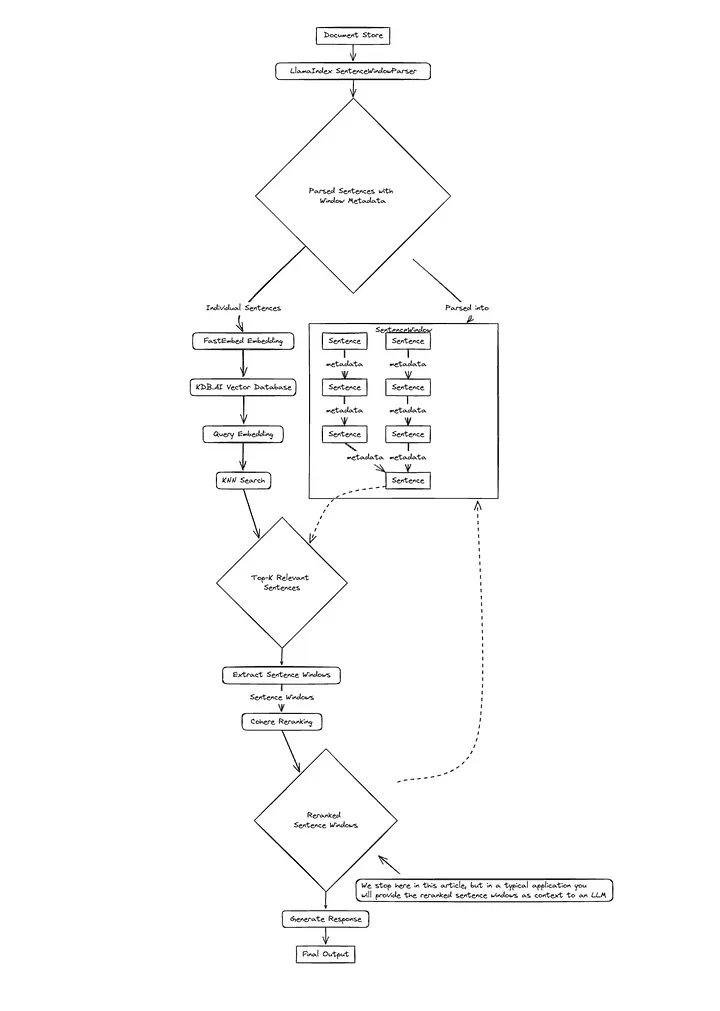

A "generally good" advanced RAG pipeline to start with 👇 1. Embed on smaller chunks: Current embedding models work best when matching on granular facts, like sentences or smaller paragraphs. If you clutter each chunk with too much text, relevant chunks are less likely to be… https://t.co/HmipmoKZAX
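As a rough illustration of the "embed on smaller chunks" step, here is a minimal sketch that splits a document into sentence-sized chunks before they would be sent to an embedding model. The `Chunk` type, the `split_into_sentence_chunks` helper, and the naive regex splitter are all hypothetical and not taken from the thread; a real pipeline would likely use a proper sentence tokenizer and an actual embedding call.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_id: str  # hypothetical identifier of the source document
    index: int   # position of the chunk within that document
    text: str    # the small unit that would be embedded


def split_into_sentence_chunks(doc_id: str, text: str, max_sentences: int = 2) -> list[Chunk]:
    """Split text into small chunks of at most `max_sentences` sentences each."""
    # Naive splitter (an assumption): break after '.', '!' or '?' followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    chunks: list[Chunk] = []
    for start in range(0, len(sentences), max_sentences):
        group = " ".join(sentences[start:start + max_sentences])
        chunks.append(Chunk(doc_id=doc_id, index=len(chunks), text=group))
    return chunks


doc = (
    "RAG retrieves text before generating. "
    "Small chunks match granular facts. "
    "Large chunks dilute the signal."
)
chunks = split_into_sentence_chunks("doc-1", doc, max_sentences=1)
for chunk in chunks:
    print(chunk.index, chunk.text)
```

Keeping `doc_id` and `index` on every chunk is one way to map a matched sentence back to its surrounding context at retrieval time.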

Before you build complex agent systems, I’d recommend building with the individual “agent ingredients” first to gain a better first principles understanding of how they work. Here are the main ingredients for building an agent (mini 🧵) Query Planning: Given the task +…

It's been a week since LLaMA 3 dropped. In that time, we've:
- extended context from 8K -> 128K
- trained multiple ridiculously performant fine-tunes
- got inference working at 800+ tokens/second

If Meta keeps releasing OSS models, closed providers won't be able to compete.

I am #hiring senior engineering firepower for my team @Rippling in #Bengaluru to disrupt a 5 trillion usd/year benefits and insurance space. HMU or @mister_whistler for deets! RT for good karma!

How faithful are LLMs in RAG applications? 🤔 A recent paper from @Stanford explores how well LLMs respect retrieved information or fall back to their internal knowledge. The goal is to quantify the tension between LLMs’ internal knowledge and the retrieved information…

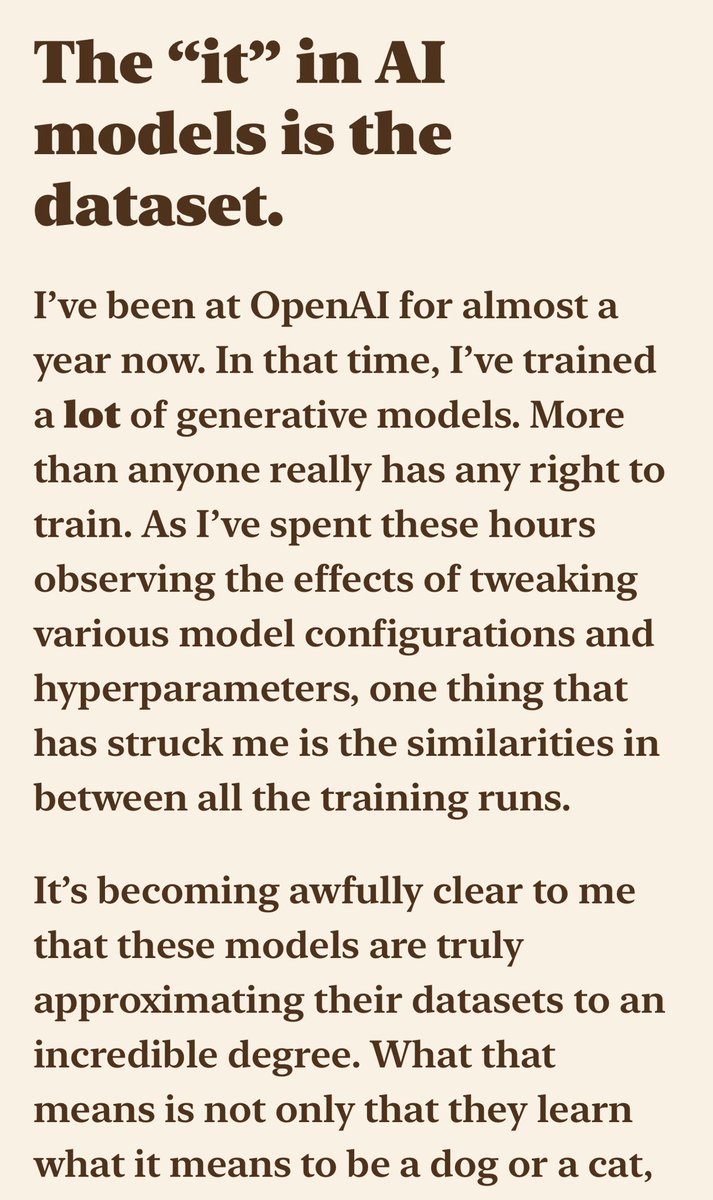

Data is all you need?

“I’ve been at OpenAI for almost a year now. In that time, I’ve trained a lot of generative models. The “it” in AI models is the dataset” Thank you we need more people to stand up and admit this. I have been trying for decades. But now it is making sense. nonint.com/2023/06/10/the…

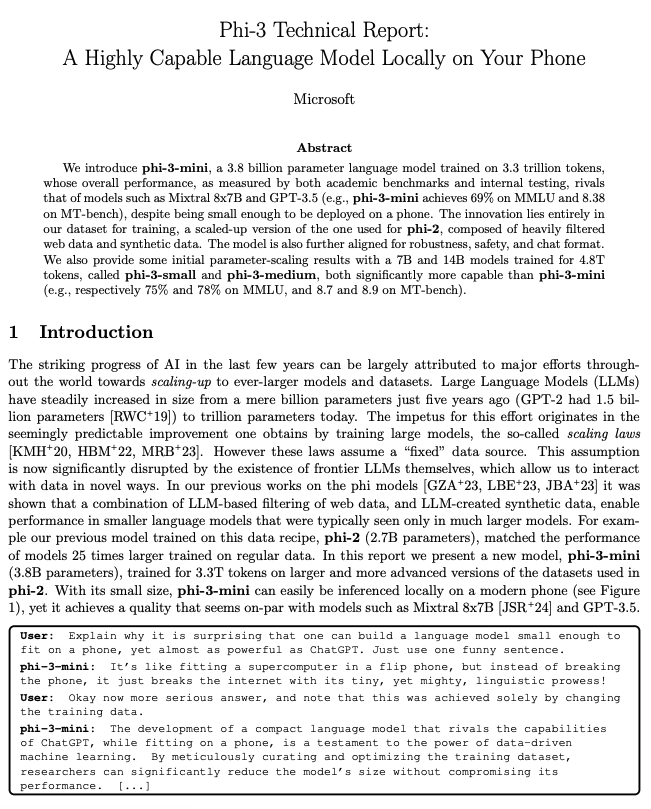

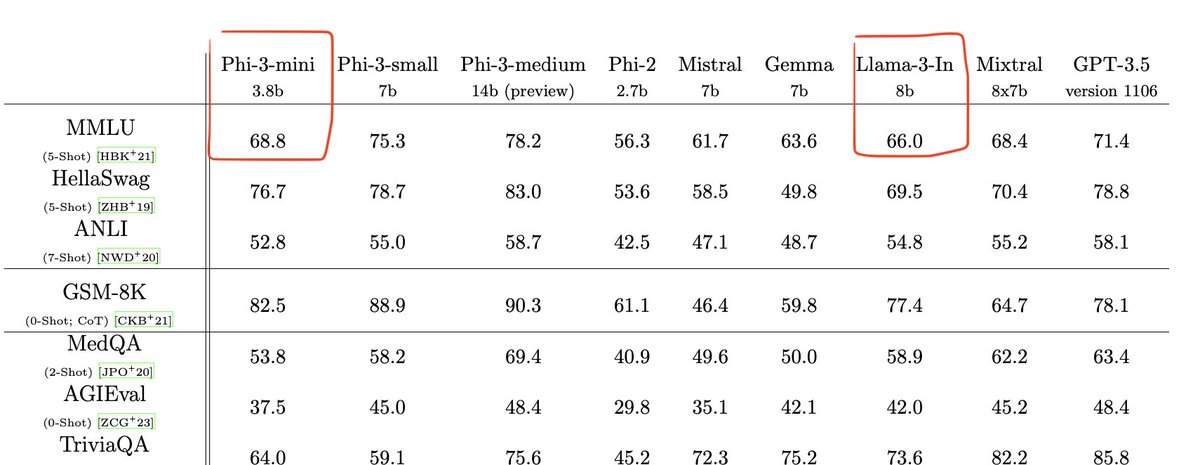

Phi-3 can run on a phone, and is very competitive with gpt-3.5!

Here are 2 awesome resources to help you build RAG apps using @LangChainAI 👉 DeepLearning.AI course on Chat with Your Data learn.deeplearning.ai/courses/langch… 👉 RAG From Scratch playlist by LangChain youtube.com/playlist?list=…

If you want to build your own RAG application, @akshay_pachaar's thread tells you how to get started 👇

Let's build a "Chat with your docs" RAG application, step-by-step:

🅿️ Phi-3 is now available on Hugging Face 3.8B parameter model in two versions: 4K and 128K context length. Excellent performance + MIT license, enjoy! 🥳 🤗 4k: huggingface.co/microsoft/Phi-… 🤗 128k: huggingface.co/microsoft/Phi-…

Apple is joining the public AI game with 4 new models on the Hugging Face hub! huggingface.co/collections/ap…

The Cohere Toolkit is here! We’re releasing an open-source repository for devs to build RAG applications, just like the Cohere demo. It's a game-changer for developers to accelerate and simplify building enterprise AI apps like knowledge assistants. cohere.com/blog/cohere-to…

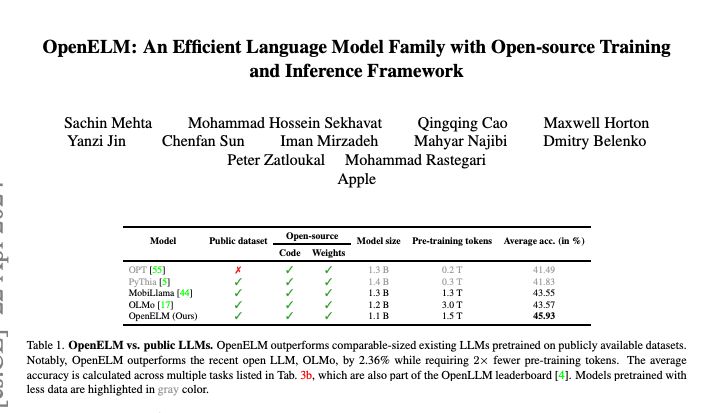

Apple's OpenELM model is out 👉 A layer-wise scaling strategy is used to efficiently allocate parameters within each layer of the transformer model, enhancing accuracy. 👉 Pretrained and instruction-tuned models with 270M, 450M, 1.1B, and 3B parameters are released #apple #llm #ai

A lot of the insider knowledge on how to build an LLM has gone underground in the last 24 months. We are going to build #SnowflakeArctic in the open Model arch ablations, training and inference system performance, dataset and data composition ablations, post-training fun, big…

Phi-3 has "only" been trained on 5x fewer tokens than Llama 3 (3.3 trillion instead of 15 trillion). Phi-3-mini has "only" 3.8 billion parameters, less than half the size of Llama 3 8B. Despite being small enough to be deployed on a phone (according to the technical…
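The ratios quoted above can be checked with a few lines of arithmetic. This is only a sketch using the figures stated in the tweet; treating "8B" as exactly 8 billion parameters is an assumption made for illustration.

```python
# Figures as quoted in the tweet; variable names are illustrative.
phi3_tokens = 3.3e12        # 3.3 trillion training tokens
llama3_tokens = 15e12       # 15 trillion training tokens
phi3_mini_params = 3.8e9    # 3.8 billion parameters
llama3_8b_params = 8e9      # assumption: "8B" taken as exactly 8 billion

token_ratio = llama3_tokens / phi3_tokens
param_ratio = phi3_mini_params / llama3_8b_params

print(f"Llama 3 saw about {token_ratio:.1f}x as many tokens as Phi-3")
print(f"Phi-3-mini has about {param_ratio:.1%} of the parameters of Llama 3 8B")
```

On these numbers the token ratio works out to roughly 4.5x, which the tweet rounds to 5x, and the parameter ratio is just under one half.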

What is the best embedding model for your RAG app? @helloiamleonie writes a thread to get you started 👇

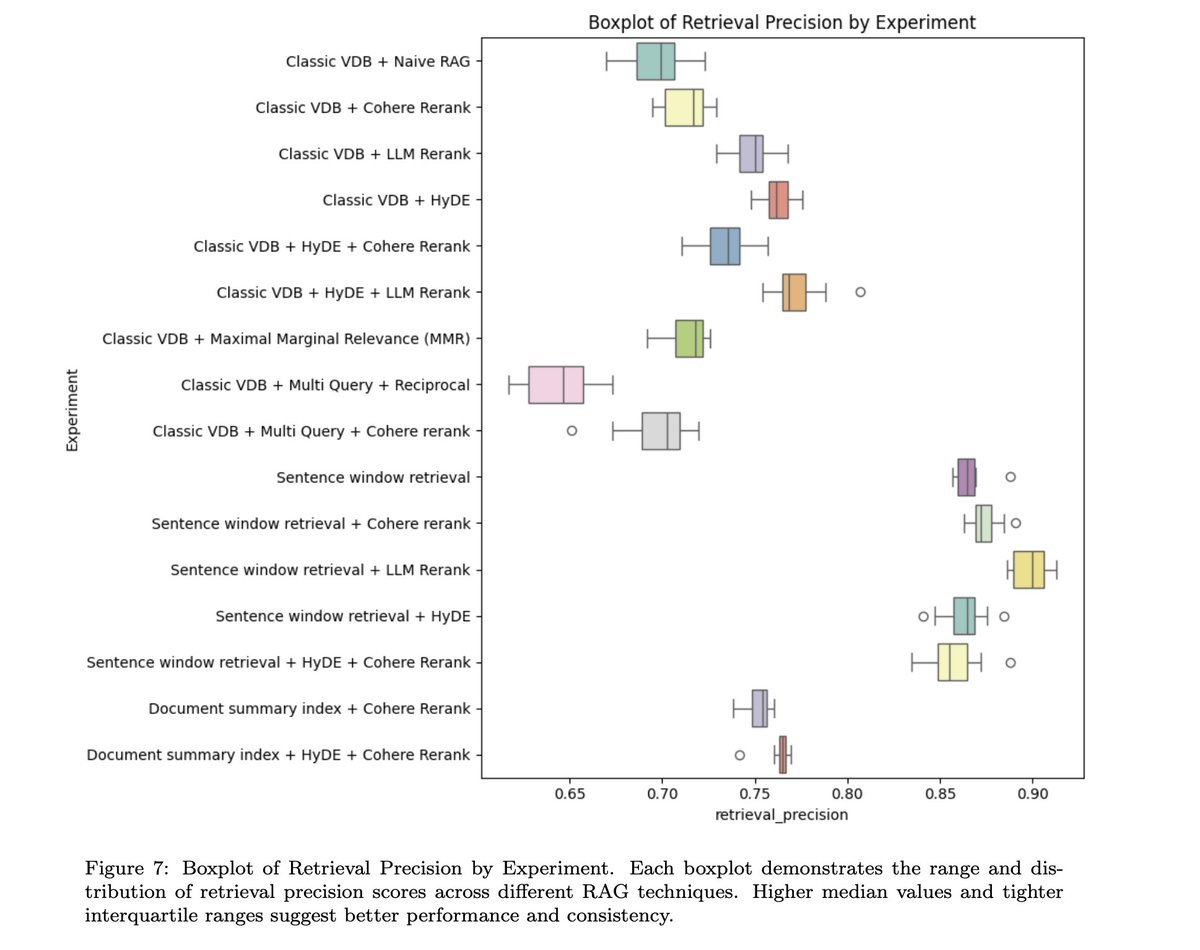

With new tutorials on RAG coming up every day, it makes you wonder what really works and what does not. Read this comprehensive evaluation survey, ARAGOG arxiv.org/pdf/2404.01037… 👇

Wheau @WheausdI5p

2 Followers 132 Following

มาริใน @l6NOQqjY4Sr7G4

74 Followers 1K Following There is more than one side to sexy. Follow me and discover other moments that will make your heart beat faster! The contact information on the home page is updated all the time.

BettyGalbraith @1bU81tHdEpLFnh0

4 Followers 301 Following

Ayush @localmrbean

35 Followers 449 Following Here to retweet. Interested in distributed systems and cloud computing.

Alyssa O'Loghlen @AlyssaLogh26363

1 Followers 98 Following Fan Page for the goddess @AnastiyaRub 🤫 her linktree👇🏼

Groq Inc @GroqInc

47K Followers 472 Following Creator of the LPU™ Inference Engine, providing the fastest speed for AI applications, designed & engineered in N. America https://t.co/DsEqVAC5Dp

Wing Lian (caseus) @winglian

9K Followers 2K Following @axolotl_ai OSS maintainer. Axolotl AI founder. AI/ML tinkerer. Building tools for everyone. ☕ https://t.co/3ni1V4rI9w

LM Studio @LMStudioAI

16K Followers 189 Following Download & run local/open LLMs on your computer 👾 App: https://t.co/YS5uiRQ7TI (Mac/Windows/Linux)

Susan Zhang @suchenzang

20K Followers 506 Following @ Google Deepmind. Past: @MetaAI, @OpenAI, @unitygames, @losalamosnatlab, @Princeton etc. Always hungry for compute.

Riddhi Dutta @rite2riddhi

16K Followers 109 Following Software Engineer III @Google , ex Amazon , Salesforce | Teacher | Youtuber | Talks about Tech , Travel & Cricket.

Dhravya Shah @DhravyaShah

9K Followers 1K Following 18. techno optimist, passionate dev who ships (a lot) Building a better internet @Cloudflare. Building a second brain for your life https://t.co/fVMMAL23RR

Yair Schiff @SchiffYair

167 Followers 122 Following

Dan Fu @realDanFu

4K Followers 176 Following CS PhD Candidate at Stanford, systems for machine learning. Sometimes YouTuber/podcaster. Academic Partner, @togethercompute.

Songlin Yang @SonglinYang4

2K Followers 2K Following PhD student @MIT_CSAIL. Prev. @ShanghaiTechUni @SUSTechSZ. Working on scalable and principled methods in #ML & #NLProc. INTP | 5w4 | sx/sp | she/her

Simran Arora @simran_s_arora

2K Followers 212 Following CS PhD student at @StanfordAILab @hazyresearch

Dust @dust4ai

7K Followers 41 Following Amplify your team's potential with customizable and secure AI assistants.

Jim Fan @DrJimFan

230K Followers 3K Following @NVIDIA Sr. Research Manager & Lead of Embodied AI (GEAR Lab). Creating foundation models for Humanoid Robots & Gaming. @Stanford Ph.D. @OpenAI's first intern.

Reka @RekaAILabs

11K Followers 13 Following An AI research and product company 🫠. We are a team of scientists and engineers building state-of-the-art multimodal language models 😻

Kshitij Grover @kshithappens

935 Followers 1K Following CTO @useorb. We built billing so you don't have to. prev infra eng @asana, cs/philosophy @caltech. fan of roger federer, coffee, dfw, and phil.

Grace Ge @gracewenge

676 Followers 405 Following “all is fair in love & tech”. partner @amplifypartners

Saksham Consul @SakshamConsul

87 Followers 313 Following ML Sys Engineer @LaminiAI | Ex-Roboticist | Prev MS @Stanford, ML @IkigaiInc, @Nvidia | Builder

Lamini @LaminiAI

5K Followers 9 Following The enterprise LLM Platform that you can own. Run and train custom open models today.

Sharon Zhou @realSharonZhou

23K Followers 1 Following Building the future of LLMs | Cofounder & CEO, @LaminiAI | Prev: CS Faculty & PhD @Stanford. Product @Google. @Harvard | @MIT 35 under 35. Angel investor.

Thomas Wolf @Thom_Wolf

68K Followers 4K Following Co-founder and CSO @HuggingFace - open-source and open-science

Sebastian Hofstätter @s_hofstaetter

1K Followers 254 Following RAG & tool use modelling co-lead @Cohere; PhD in efficient neural information retrieval from @tu_wien

Pedro Cuenca @pcuenq

5K Followers 769 Following ML Engineer at 🤗 Hugging Face | Co-founder at LateNiteSoft (Camera+). I love AI and photography.

Bill Chambers @bllchmbrs

1K Followers 812 Following 👷 https://t.co/ODHNO6YBx7 ✍️ https://t.co/cX04twkyJ5 1x indie exit 1x O'Reilly author Prev: 🚀s ➡️ Anyscale, Databricks, $PCOR Talks about Startups, Data, AI

Andrew Gao @itsandrewgao

27K Followers 2K Following techno optimist! currently: @nomic_ai @stanford; prev @LangChainAI; Z Fellow 🇺🇸

Rohan Paul @rohanpaul_ai

13K Followers 1K Following ML Engineer (e/acc) 📌 https://t.co/x0IIWfnOt8 🚀 https://t.co/QEO4CKRl1b Open LLMs is Happiness 💡 Ex Deutsche & HSBC. DM for collaboration.

Leonie @helloiamleonie

7K Followers 410 Following 🖋️ Technical writer https://t.co/mXXEyoAUJZ 🥑 Developer Advocate @weaviate_io 🦆 Kaggle Notebooks Grandmaster https://t.co/3wrvdColIa

Soumith Chintala @soumithchintala

187K Followers 887 Following Cofounded and lead @PyTorch at Meta. Also dabble in robotics at NYU. AI is delicious when it is accessible and open-source.

François Chollet @fchollet

470K Followers 770 Following Deep learning @google. Creator of Keras. Author of 'Deep Learning with Python'. Opinions are my own.

Jyoti Aneja @JyotiAneja

566 Followers 619 Following Senior Researcher @MSFTResearch | PhD from the University of Illinois, Urbana-Champaign | working on Phi models, Physics of LLMs👩🏻💻

Shubham Saboo @Saboo_Shubham_

41K Followers 448 Following AI Products @tenstorrent 📕 Author of books on GPT-3 & Neural Search in Prod ✍️ Tweets about LLMs & Prompt Engineering 📩 DMs open for collab

Ethan Mollick @emollick

212K Followers 554 Following Professor @Wharton studying AI, innovation & startups. Democratizing education using tech Book: https://t.co/CSmipbJ2jV Substack: https://t.co/UIBhxu4bgq

Yann LeCun @ylecun

713K Followers 718 Following Professor at NYU. Chief AI Scientist at Meta. Researcher in AI, Machine Learning, Robotics, etc. ACM Turing Award Laureate.

Mustafa Suleyman @mustafasuleyman

131K Followers 536 Following CEO, Microsoft AI | Author: The Coming Wave | Past: Co-founder, @InflectionAI & @GoogleDeepMind

Andrew Ng @AndrewYNg

1.0M Followers 913 Following Co-Founder of Coursera; Stanford CS adjunct faculty. Former head of Baidu AI Group/Google Brain. #ai #machinelearning, #deeplearning #MOOCs

Nicolas Patry @narsilou

2K Followers 185 Following ML Engineer @huggingface. Anything ML inference related. Maintaining `pipeline` in `transformers`. Loving Rust.

Philipp Schmid @_philschmid

16K Followers 655 Following Tech Lead and LLMs at @huggingface 👨🏻💻 🤗 AWS ML Hero 🦸🏻 | Cloud & ML enthusiast | 📍Nuremberg | 🇩🇪 https://t.co/l1ppq3q3hk

abhishek @abhi1thakur

81K Followers 664 Following 🤗 I build AutoTrain @huggingface 👨🏽💻 World's First 4x Grand Master @kaggle 🎥 YouTube 100k+: https://t.co/BHnem8fTu5 ⭐ GitHub Star

Grant Slatton @GrantSlatton

6K Followers 529 Following Formerly built the fastest filesystem in the world at AWS, now building the fastest spreadsheet at https://t.co/ojwoGUGC8P

Python Developer @Python_Dv

80K Followers 1K Following A place for all things related to the #python #programming #coding #webdeveloper #webdevelopment #pythonprogramming #ai #ml #machinelearning #datascience ...

Hugging Face @huggingface

347K Followers 189 Following The AI community building the future. https://t.co/VkRPD0VKaZ #BlackLivesMatter #stopasianhate

Omar Khattab @lateinteraction

11K Followers 2K Following CS PhD candidate @StanfordNLP. 2022 Apple Scholar in AI/ML. Author of ColBERT (https://t.co/2ZtgXoa1np), DSPy (https://t.co/BH7WmMKDXR), & various retrieval & LM systems.

"LangChain Tool Calling feature just changed everything" We recently added a common interface for tool calling across model providers. This makes it easy to build agents that work across models. Watch @EdenEmarco177 explain why this is a big deal youtube.com/watch?v=dj8Yqi…

There are many ways a very large and powerful model can be useful, even if no one can run it locally today: Distillation -- think about all recent results people show distilling GPT-4 outputs and training smaller models on those, how much more can be done if the teacher model…

I really love Meta’s open-source focus, but I doubt many of us will leverage such big models. None of us will run Llama3 400B locally 😅 Using APIs stays the way most of us will interact and work with LLMs. But Llama-3 8B or even 70B is quite cool, haha! Still, open sourcing…

Phi-3 Technical Report Microsoft presents a new 3.8B parameter language model called phi-3-mini. It's trained on 3.3 trillion tokens and is reported to rival Mixtral 8x7B and GPT-3.5. Has a default context length of 4K but also includes a version that is extended to 128K…

BREAKING: Eric Schmidt-backed Augment, a GitHub Copilot rival, launches out of stealth with $252M in funding at a $977M valuation

There is a really nice community of researchers developing transformer alternatives. Want to highlight these impressive folks. Simran Arora (@simran_s_arora), Chunting Zhou (@violet_zct), Dan Fu (@realDanFu), and Songlin Yang (@SonglinYang4)

Apple presents OpenELM An Efficient Language Model Family with Open-source Training and Inference Framework The reproducibility and transparency of large language models are crucial for advancing open research, ensuring the trustworthiness of results, and

Great short thread

Why do people get confused by DSPy, part 1: This confused me when I first got started. Let's dive in.

I can't believe microsoft just dropped phi-3 less than a week after llama 3 arxiv.org/abs/2404.14219. And it looks good!

We're hiring ML research interns this summer for a recursive self-improvement research role.

Requirements:
- Cracked
- Believe in personal AGI
- Want sama to kneel
- Willing to do acid before training runs

This is a great way to get some early ML research under your belt.