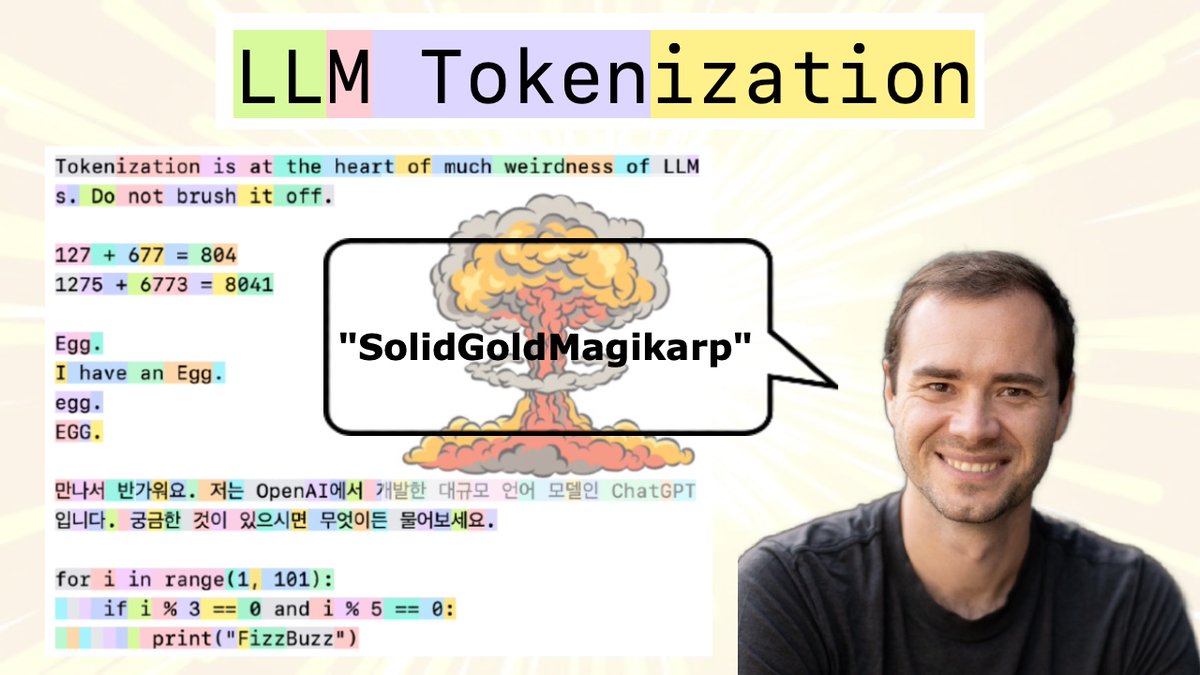

New (2h13m 😅) lecture: "Let's build the GPT Tokenizer" Tokenizers are a completely separate stage of the LLM pipeline: they have their own training set, training algorithm (Byte Pair Encoding), and after training implement two functions: encode() from strings to tokens, and decode() back from tokens to strings. In this lecture we build from scratch the Tokenizer used in the GPT series from OpenAI.

We will see that a lot of weird behaviors and problems of LLMs actually trace back to tokenization. We'll go through a number of these issues, discuss why tokenization is at fault, and why someone out there ideally finds a way to delete this stage entirely.

@karpathy Why do we call this „training“ a tokenizer? My understanding is that there are no parameters that are being learned. Unless we consider the vocab at each time step the parameters.

@karpathy This will land on my "I saw this lectures about LLMs and..."-list of links for sure. Thanks for doing this Andrej. You're an awesome dude.