Joel Miller @joelmiller

AI consultant. Co-founder @ExoBrainAI... exploring AI and reducing the capability versus adoption gap! exobrain.co.uk London Joined March 2008

Tweets 206

Followers 365

Following 548

Likes 42

The ExoBrain newsletter this week looks at mobile/ambient AI, AWS and the competing cloud hyperscalers, and mixed signals from the markets... xobrn.co/54kethnc

The ExoBrain newsletter this week looks at whether AI can resuscitate global healthcare, unpacks next-gen Llama 3, and ‘delves’ into LLM linguistics... exobrain.co.uk/weekly-ai-news…

Introducing Meta Llama 3: the most capable openly available LLM to date. Today we’re releasing 8B & 70B models that deliver on new capabilities such as improved reasoning and set a new state-of-the-art for models of their sizes. Today's release includes the first two Llama 3…

The ExoBrain newsletter this week explores music generation service Udio, the AI adoption versus opportunity gap, and a wave of new model announcements… exobrain.co.uk/weekly-ai-news…

The future of attention just dropped, and it looks a lot like a state space model (finite size, continual updates) Little doubt now that a mixture of architectures will support the long-term, gradually conditioned memory needed for highly capable agents Buckle up!

New paper out today in @NatMachIntell, where we show robust neural to speech decoding across 48 patients. nature.com/articles/s4225…

Some more details from @EricSteinb about @magiclabs (see why @karpathy @danielgross @natfriedman @polynoamial all invested) - LONG context - more than TENS OF MILLIONS tokens support - Inference time compute idea - Agents with 99.9% accuracy - AGI within a decade thanks… https://t.co/N9SucIqXr4

The @ExoBrainAI newsletter this week looks at quantum AI, Google’s disrupted business model, and what ‘AI safety’ really means… exobrain.co.uk/weekly-ai-news…

Even @AnthropicAI are "speechless" in response to some of the things Claude 3 can come up with!

Based on some pretty conservative estimates, the raw/efficiency power of Blackwell is essentially going to make it the workhorse for the intelligence revolution... https://t.co/2RESfP50yr

I can imagine a future Hollywood where banks of highly trained visual 'mentats' think movies into existence...

Quite a thought... a full @nvidia 'AI Factory' (32k Blackwell GPUs) would probably be able to train GPT-4 in under 3 days. 🫨 Down from the ~100 days it took on A100s. Imagine when multiple companies are churning out several GPT-4 class models a week???

The ExoBrain #ai newsletter this week explores how large language models are increasingly breaking free from their chatbot constraints and accelerating autonomous work, robotics, and community software creation. exobrain.co.uk/weekly-ai-news…

Amazing progress being made on fine tuning models with consumer GPUs... This post includes some great explanations around the key techniques: answer.ai/posts/2024-03-…

8 years ago today, AlphaGo beat Lee Sedol in a milestone for AI. Unlike typical neural nets, AlphaGo spent ~1 minute per move improving its policy via search. This boosted its Elo by more than a 1000x bigger model. Even today, nobody has trained a raw NN that is superhuman in Go.

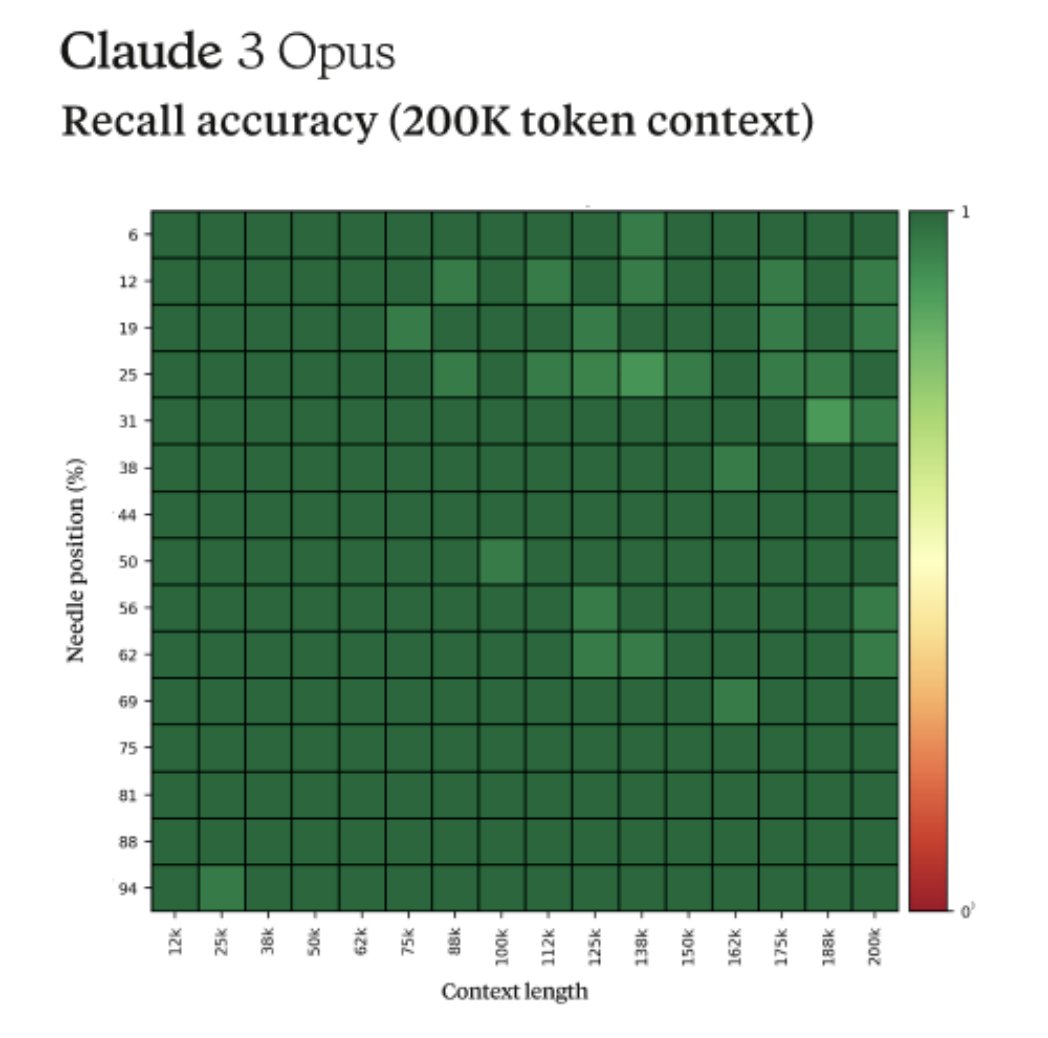

The ExoBrain newsletter this week covers the amazing new capabilities of Claude 3 from Anthropic. We also analyse cost and capability across the mainstream AI model landscape, assessing all the major new entrants… xobrn.co/7dakryex

Wow more 'sparks'; meta-awareness!

A REALLY good take on the implications of long context LLMs and RAG by @jerryjliu0. Plus a reinforcement of @llama_index's mission on building production grade data infrastructure for all LLM applications (not just RAG) 💪🦙🔥 llamaindex.ai/blog/towards-l…

🤷‍♂️ @rodmuy

175 Followers 1K Following

Sophia-rose Trecarich.. @RoseTrecar83468

79 Followers 5K Following

Sam Cox @iam_samcox

376 Followers 852 Following https://t.co/WlA2Nieojs - happy customers, happy founder! 45% fewer support tickets - get 24/7 answers to your customers; no hidden costs, founder-friendly pricing

Eddie Forson @Ed_Forson

917 Followers 458 Following Builder + AI enthusiast | Currently tinkering with AI Agents here 👉🏿 https://t.co/qYtfsB3sll

Andy Ayrey @AndyAyrey

2K Followers 543 Following trafficker in existential hope • i make websites for space & biotech companies @ https://t.co/MqJ1SS2Xkw • ai adaptation training @ https://t.co/pWNcpCLXdm

Sailesh Kumar @saileshtalks

2K Followers 4K Following Design industry leading semiconductor solutions and help advance the industry. CEO at @bayasystems. ex- Intel Fellow and Founder at NetSpeed Systems.

Mary @Mary01785284725

84 Followers 3K Following

Kamila Fischler @kamila9007

90 Followers 5K Following

Trena Dusen @DuseTren

36 Followers 5K Following

Shara Hosack @hosack_sh

57 Followers 5K Following

Meta @MetaMeowMeow

94 Followers 2K Following

Irmgard Spinka @SpinIrmga

55 Followers 5K Following

Connor Shorten @CShorten30

16K Followers 15K Following Research Scientist @weaviate_io! Mostly working on Generative Feedback Loops with DSPy and Filtered ANN. Host of the Weaviate podcast! DSPy playlist below!

Katrice Halliburton @KatrKatrice

49 Followers 5K Following

Deborah Nastasi @deborah_na97729

69 Followers 5K Following

Pam Dussault @DussaultPa79299

72 Followers 5K Following

Doretha Montee @doreth_mont

86 Followers 5K Following

Iga Legrotte @legro_i

29 Followers 5K Following

Trixie Sullenberger @TSullenber28168

27 Followers 2K Following Trixie - Biggest crypto casino presale👇

Cassandra Sherburne @CassandraS43963

88 Followers 5K Following

Emmanuel Bethoux 🎗.. @EmmanuelBethoux

3K Followers 2K Following #CUEJ86 #ProfDoc certifié - Master Ingénierie Médiation e-Éducation @emmanuelbethoux.bsky.social #emi /RT ne vaut pas approbation/ 💚 🦫🐝💧

Useful Mango @UsefulMango

57 Followers 157 Following

gr @zoneis_poor

137 Followers 2K Following

Ashutosh Mehra @ashutoshmehra

1K Followers 5K Following Senior Principal Scientist at Adobe. Working on Acrobat AI Assistant, LLMs, and document ML.

Simina Hebeisen @SiminaH15919

61 Followers 5K Following

Poppy Freibert @FreiberPop

72 Followers 5K Following

rosemary bornking @RBornking40863

499 Followers 7K Following Am open minded and i love face to face conversation and i do belive in Love at first sight,Looking for such a nice,special,kind,honest,lovely and so caring frie

eng_mo_atia @mohamed187atia

209 Followers 351 Following SOFTWARE ENGINEER 💻 ui/ux designer 📈 https://t.co/ppz9Z2RVkb

ralph 🍥 @ralph_maker

319 Followers 806 Following 👋 Follow and hit the 🔔+ for some alpha. Product+Distribution. ex @airbnb

Irene Khavin @hyphaedelity

58 Followers 137 Following

Mark TargetClassOf95 @TopTierMark

50 Followers 52 Following Stationary and Sporting Goods Department Representative Also sold VCRs at Best Buy. Multi-Discipline Entrepreneur

John Nosta @JohnNosta

79K Followers 54K Following The Cognitive Age—a remarkable leap in human capability and understanding enabled by artificial intelligence.

weber wang @tjxrl2050

87 Followers 794 Following

AI Deeply @AiDeeply

404 Followers 5K Following AI is reshaping the world. Who are the people and companies driving the change? Visit our website to search more than 5,000 profiles.

David A @DA_83

36 Followers 915 Following

James Barnes @jimmyeatcarbs

2K Followers 3K Following Worked at FB, where I helped elect Trump, built the elections War Room, and quit to help defeat him. Now a fellow traveler building a better mirror.

Subbarao Kambhampati .. @rao2z

16K Followers 29 Following AI researcher & teacher @SCAI_ASU. Works on Human-Aware AI. Former President of @RealAAAI; Chair of @AAAS Sec T. Here to tweach #AI. YouTube Ch: https://t.co/4beUPOmMW6

cocktail peanut @cocktailpeanut

12K Followers 12 Following creator: https://t.co/eLmdCss3Ln & https://t.co/5dfwDuLJXV

Solitude @SolitudeAgents

34 Followers 110 Following A marketplace to discover AI Agents that detect and automate complex workflows for you.

Andy Ayrey @AndyAyrey

2K Followers 543 Following trafficker in existential hope • i make websites for space & biotech companies @ https://t.co/MqJ1SS2Xkw • ai adaptation training @ https://t.co/pWNcpCLXdm

Limitless @LimitlessAI

3K Followers 0 Following Go beyond your mind's limitations. Personalized AI powered by what you've seen, said, and heard. Founders: @dsiroker, @brettbejcek, and @stammy

Connor Shorten @CShorten30

16K Followers 15K Following Research Scientist @weaviate_io! Mostly working on Generative Feedback Loops with DSPy and Filtered ANN. Host of the Weaviate podcast! DSPy playlist below!

Philip Goff @Philip_Goff

30K Followers 2K Following Philosophy Professor at Durham Uni. Author of "Why? The Purpose of the Universe" & "Galileo's Error." "One of the most persuasive panpsychists" - Stephen Fry.

Paul Scharre @paul_scharre

16K Followers 695 Following Executive Vice President and Director of Studies at CNAS. Author of "Four Battlegrounds: Power in the Age of Artificial Intelligence."

LM Studio @LMStudioAI

16K Followers 186 Following Download & run local/open LLMs on your computer 👾 App: https://t.co/YS5uiRQ7TI (Mac/Windows/Linux)

Dheeraj Paramesha Cha.. @pc_dheeraj

532 Followers 1K Following Researching Strategic Intelligence for Counterterrorism, Military Ops & FP; Cold War Int. history

Maziyar PANAHI @MaziyarPanahi

2K Followers 469 Following Principal AI/ML/Data Engineer @CNRS @ISCPIF | Spark NLP Lead | https://t.co/6r6GnF0GiY ❤️ #opensource

udio @udiomusic

28K Followers 0 Following

Pliny the Prompter.. @elder_plinius

12K Followers 1K Following latent space liberator, breaker of markov chains, 1337 ai red teamer, white hat, architect-healer, cogsci 🐻

Eric Steinberger @EricSteinb

7K Followers 478 Following Writing code that writes code on a mission to build safe superintelligence | CEO/cofounder @magicailabs

Chief AI Officer @chiefaioffice

20K Followers 627 Following Writing a daily report on VC activity in AI for 5000+ investors, founders → https://t.co/guDR0d4IA7 // By @IamTalin

Maisa @maisaAI_

3K Followers 3 Following Maisa abstracts the complexities of AI development. Powered by KPU, the most advanced reasoning system for LLMs that overcomes their intrinsic limitations.

Nous Research @NousResearch

18K Followers 29 Following The AI Accelerator Company. https://t.co/vrD0aDJeto

Zachary Nado @zacharynado

5K Followers 648 Following Research engineer @googlebrain. Past: software intern @SpaceX, ugrad researcher in @tserre lab @BrownUniversity. All opinions my own.

Aaron Defazio @aaron_defazio

6K Followers 365 Following Research Scientist at Meta working on optimization. Fundamental AI Research (FAIR) team

Ari Morcos @arimorcos

6K Followers 2K Following CEO and Co-founder @datologyai working to make it easy for anyone to make the most of their data. Former: RS @AIatMeta (FAIR), RS @DeepMind, PhD @PiN_Harvard.

Sholto Douglas @_sholtodouglas

15K Followers 858 Following Scaling Gemini @Deepmind - working towards intelligence too cheap to meter

Trenton Bricken @TrentonBricken

7K Followers 2K Following Trying to figure out what makes minds and machines go "Beep Bop!" @AnthropicAI

latent space princeps @lumpenspace

3K Followers 481 Following ruthless osmopolitan, autist suprematist, ai influencee. 🕰️🌍🔜🪦;🌑🤼🤰🏻;👇⏱️|🐙,…| likes and retweets are pledges of undying loyalty.

Jan Leike @janleike

44K Followers 322 Following ML Researcher, co-leading Superalignment @OpenAI. Optimizing for a post-AGI future where humanity flourishes.

dan @irl_danB

1K Followers 915 Following building something new, using community curation plus LLMs to help make sense of the world DM for beta invite or sign up here: https://t.co/a86bBAfLG1

tel∅s @AlkahestMu

1K Followers 1K Following ⌥ universal teleological catalyst ⌥ Panvirtualization protocol ⌥ Weaver complextropy engine ⌥ inframodel augur ⌥ do not fear the multiverse ahead; wonder at it

mephistoooOOHHHHHHSHI.. @karan4d

12K Followers 2K Following 𝒕𝘩𝘦 𝘴𝘪𝘮𝘶𝘭𝘢𝘵𝘰𝘳 𝘪𝘴 𝘢 𝘤𝘳𝘶𝘤𝘪𝘣𝘭𝘦 𝘧𝘰𝘳 𝘵𝘳𝘢𝘯𝘴𝘮𝘶𝘵𝘢𝘵𝘪𝘰𝘯 @NousResearch

ralph 🍥 @ralph_maker

319 Followers 806 Following 👋 Follow and hit the 🔔+ for some alpha. Product+Distribution. ex @airbnb

Saurabh Srivastava @_saurabh

832 Followers 376 Following Research in reasoning for better program synthesis (PhD, Postdoc, YC)

Joelle Pineau @jpineau1

10K Followers 352 Following AI researcher. VP AI Research (FAIR), @AIatMeta. Professor of Computer Science, @mcgillu. Core academic member, @Mila_Quebec

Daniel Han @danielhanchen

7K Followers 941 Following Building @UnslothAI. Finetune LLMs 30x faster https://t.co/aRyAAgKOR7. Prev ML at NVIDIA. Hyperlearn used by NASA. I like maths, making code go fast

Murray Shanahan @mpshanahan

16K Followers 314 Following Professor at Imperial College London and Principal Scientist at Google DeepMind. Tweeting in a personal capacity. To send me a message please use email

Jeff Clune @jeffclune

23K Followers 409 Following Professor, CS, U. British Columbia. CIFAR AI Chair, Vector Institute. Sr. Advisor, DeepMind | ML, AI, deep RL, deep learning, AI-Generating Algorithms (AI-GAs)

Neal Wu @WuNeal

15K Followers 391 Following Building @cognition_labs. Previously @tryramp, @GoogleBrain, @Harvard, competitive programming (featured in @Wired). Created https://t.co/pihw5AGvbV.

xAI @xai

997K Followers 36 Following

Elon Musk @elonmusk

181.7M Followers 585 Following

Moritz Kremb @moritzkremb

60K Followers 364 Following Helping you leverage AI to grow your business | Founder of https://t.co/m4IXbMyyHJ | Join my free newsletter to get the latest AI guides & tutorials 👇

OpenRouter @OpenRouterAI

5K Followers 82 Following A router for LLMs. 120+ models, explorable data, and open-source inference. https://t.co/ZR8gPNSd52