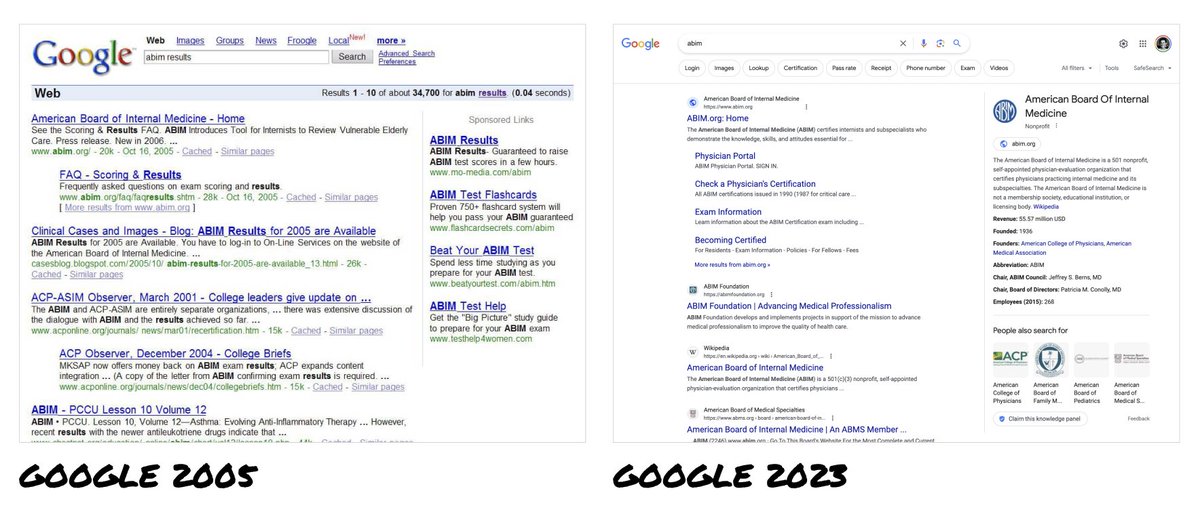

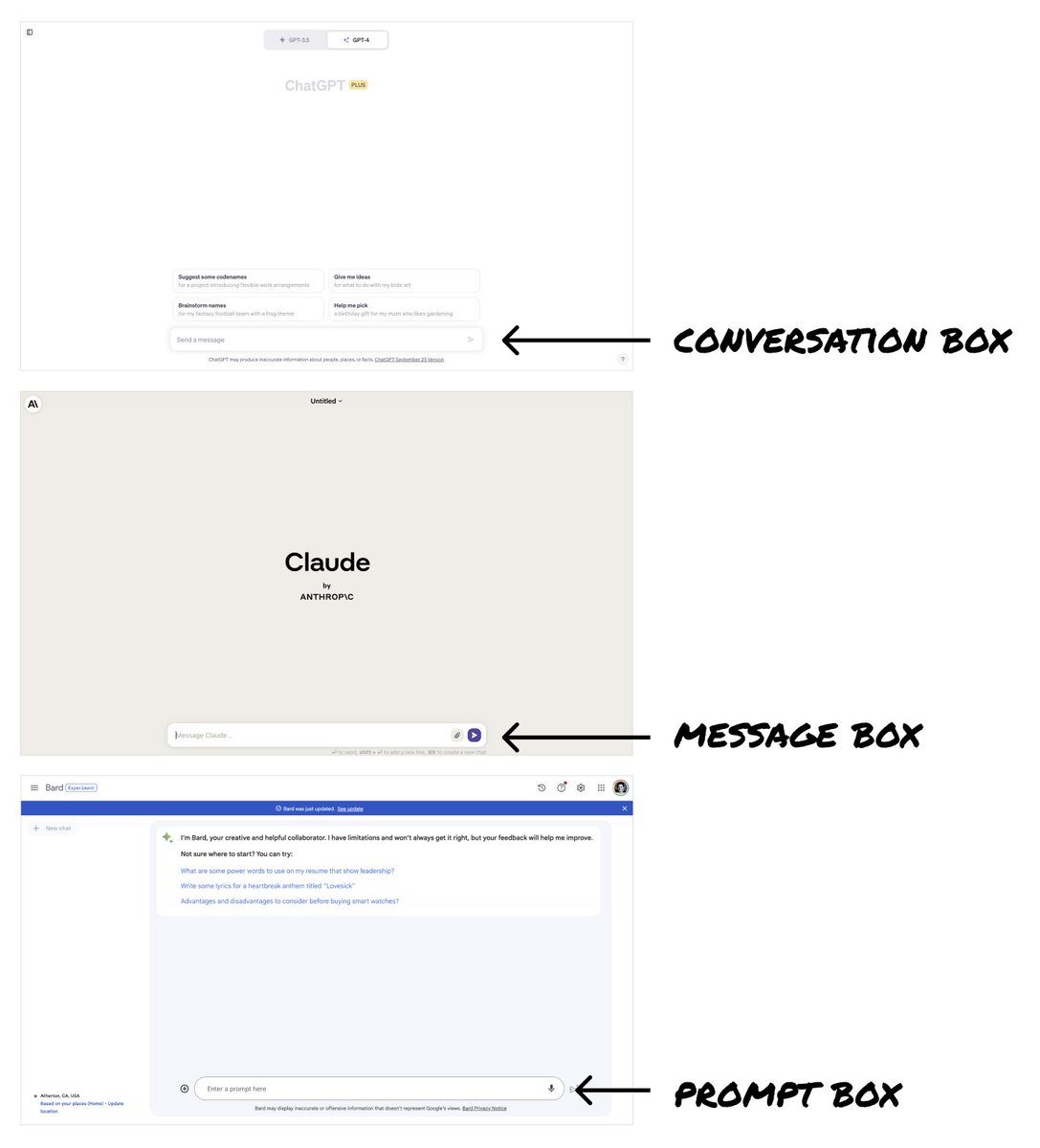

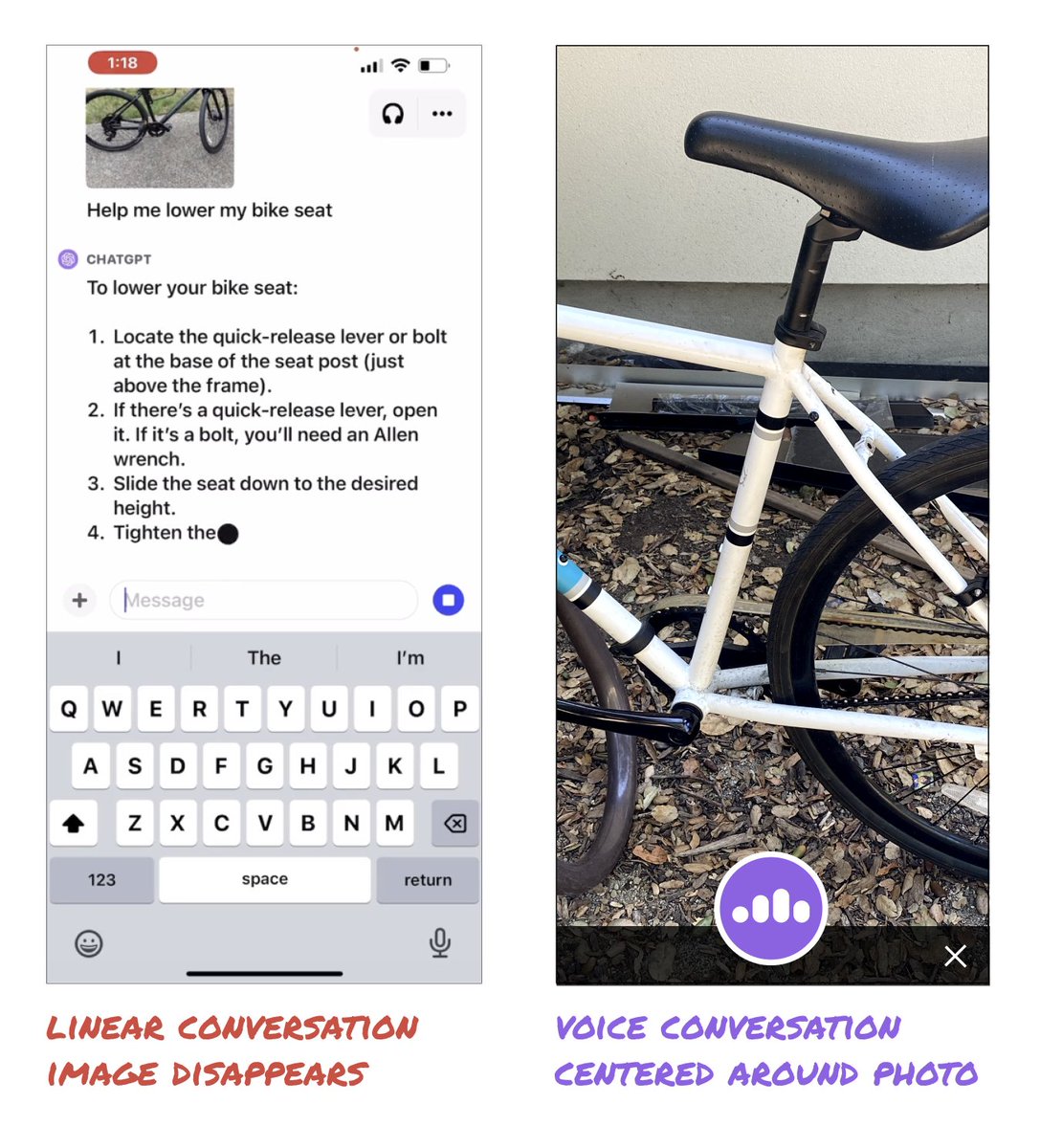

In 2006, I was 1 of 4 designers on Google Search. For 20 years, every search engine has copied Google. Now ChatGPT, Bard + Claude look like Google's offspring - “better” search engines.

But last week signaled we're on the brink of a design revolution. ChatGPT unveiled incredible new features. These could give us the opportunity to completely shift how we interface with AI. Here's the full story:

–––

When I was a designer on Google Search, all major search engines looked the same – Google, Yahoo, MSN Bing. Google was the market leader with a heavily optimized UI that supported billions of dollars in ad revenue. Naturally, it became THE way to show search results.

Its success made it illogical for Google to consider big UI changes. And any changes they did make were just mirrored by everyone else. So 20 years later, we’ve only seen incremental changes to search engine UIs.

–––

Today, we have consumer-ready LLMs (Large Language Models) freshly in our hands. As consumer products, these are in their infancy. We’re very early in understanding their capabilities and defining how people interact with them. These are uncharted waters.

And yet ChatGPT, Bard, Claude etc. all chose a text-based input box, just like Google’s search box, as the core interface. Why? The input box is simple, versatile, and familiar.

- It’s simple to understand → you type your questions into the box.
- It’s versatile → the box can handle all sorts of questions/queries.
- The paradigm is super familiar → people immediately know how to use it.

Because of this, LLMs have essentially become “a better Google.”

–––

But last week’s ChatGPT announcements thrust open the doors to new possibilities. ChatGPT is now multi-modal: it can see, hear, and speak. These are the recent announcements from @OpenAI: Voice and Photos.

The example of ChatGPT explaining how to lower a bike seat was incredible. But it could be so much better! The video showed you'll have to post multiple new photos to keep adding new information and to progress the conversation. It was still a linear conversation centered around the text box.

But what if we rethought the interface to center around the image? What if ChatGPT supported both images AND voice simultaneously? Could we end up with a more immersive experience?

–––

How else could interacting with LLMs mimic IRL conversations? Could we (or the AI) pinch to zoom or rotate the image? Could we interact in real time with video? What new possibilities open up with context being preserved over time?

–––

There is so much energy and excitement around what AI can do. But we are limiting the potential by assuming the conversation box is the best interface. Right now, designers have the chance to create truly novel interactions and bust through the 20+ year old search UI paradigm.

The ideas above are just to illustrate some potential options. But they are also intended to spark a flame. Now is the opportunity to be creative and explore divergent UIs. What are the craziest, coolest, most creative UI ideas we can unleash? LFG 🚀

@elizlaraki How about having AI manipulate your bike image in real time, demonstrating where to make the adjustments and what the result looks like, AND narrate the process using generative voice?

@elizlaraki This scene from Star Trek TNG with Geordi on the Holodeck does a great job of taking what you wrote here and projecting what an immersive 3D experience could be: youtube.com/watch?v=vaUuE5…

@elizlaraki No offense, but why the hell were there 4(!) designers for Google Search? What did you guys do all day? :)

@elizlaraki Interacting with video would be so great. Imagine cooking, or repairing something, or literally anything else, with ChatGPT directly assisting you.